With 15 years of experience building games and game services, Endava knows that game production pipelines are about efficiency as much as creativity. The company’s specialists therefore believe that unlocking the capabilities of machine learning (ML) will elevate game production teams to new heights. Professional tools like Unity’s ArtEngine already make use of ML in interesting ways to improve texture work. Endava wanted to take this one step further and also look at mesh creation and alteration, to see how ML can either serve as creative input for artists or directly facilitate the creation of 3D assets.

At Endava, the team helps game companies create better games through its service offerings, which cover the full game development lifecycle, including concept, art, development, automated testing and DevOps. The company also helps its clients innovate and improve their own tools and pipelines. To showcase Endava’s advanced research and development (R&D) capabilities, the team initiated a project that applies machine learning to the creation of assets for game productions.

To investigate this, Endava brought together a team of data scientists, MLOps engineers, 3D artists and UI developers for about two months. The team started by researching scientific papers that use neural networks to generate textures and augment meshes simultaneously.

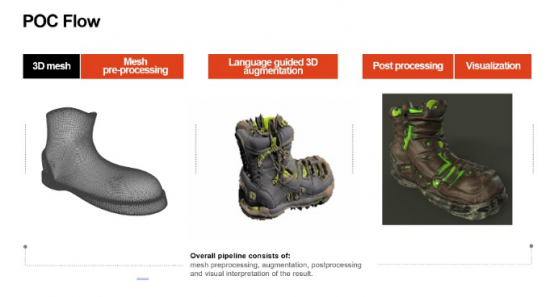

After selecting StyleGAN, StyleCLIP and Text2Mesh as the most promising candidates, they built a working implementation of an AI-aided asset creation journey. Based on voice-guided user input, the solution transforms existing mesh data into new creations. The team set out to evaluate where this approach is promising and where it hits limitations in its current form. They also improved its usability and applicability and evaluated how 3D artists would be able to work with it.

Text2Mesh is a method that takes as an input a 3D mesh with a carefully constructed topology and augments its geometry and texture, striving to match the textual description provided by the user. The mesh evolution respects the semantic meaning of each part of the object and is determined by a set of parameters that model the accuracy of the details, the magnitude of the deformation or the number of iterations, among others. In the example below, it grew laces in the proper position and produced spiked soles for hiking boots.
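To make the "magnitude of the deformation" parameter more concrete, here is a minimal sketch in Python. It assumes the deformation is expressed as a signed per-vertex offset along the vertex normal and that the offset is squashed into a fixed range. The function name `displace_along_normals`, the `max_offset` parameter and the use of `tanh` are illustrative assumptions, not Endava's implementation or the actual Text2Mesh code.

```python
import math


def displace_along_normals(vertices, normals, raw_offsets, max_offset=0.1):
    """Move each vertex along its unit normal by a bounded signed distance.

    vertices, normals: lists of (x, y, z) tuples of equal length.
    raw_offsets: one unbounded number per vertex (for example a network output).
    max_offset: hypothetical cap on how far a vertex may travel.
    """
    moved = []
    for (x, y, z), (nx, ny, nz), raw in zip(vertices, normals, raw_offsets):
        # tanh maps any real number into [-1, 1], so |d| never exceeds max_offset.
        d = max_offset * math.tanh(raw)
        moved.append((x + d * nx, y + d * ny, z + d * nz))
    return moved
```

Because only vertex positions change, the vertex count and face list of the input mesh stay untouched in this sketch.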

The ability of Text2Mesh to develop a meaningful geometry and texture in 3D makes it a powerful tool in the hands of the company’s experienced data scientists and enables the creation of tools that can save many hours of work for visual artists.

Endava’s team wanted to explore if 3D artists see value in using a neural network that specifically adds details to a basic 3D shape or its texture as an inspiration for creating interesting assets. How much influence would an artist want, and how would they want to interact?

Inspiration often comes from finding value in the unexpected. First and foremost, the solution is useful for creating unexpected results. Based on the directional input given by a user, the solution adds features to the shape and texture of a mesh, which leads to interesting and inspirational outcomes. Have you ever wondered what a broccoli horse looks like? Neither had we, but now we know for sure! Let’s have a look at some examples:

Endava’s specialists concluded that the solution could likely be used with some additional development. The artists really valued not having to start with a blank page, and even the weirdest AI creation, like this cookie dough alien shape augmentation, can spark creativity. The team’s UI and some post-processing helped immensely to make the tool more accessible to the 3D artists.

For example, one of Endava’s artists took this boot, which had been augmented with certain patterns and shapes, and turned it into a proper asset. This leads us to the next segment.

Building on the former approach, the team wanted to find out how usable the created assets are out of the box as a starting point for high-quality game assets. What auto-processing could they add to facilitate pipeline integration and actually make the artist more productive, specifically when variations or skins of an asset are needed?

To make the assets more useful for production purposes, the team built in algorithms to generate more useful results. This mostly meant ensuring that the topology of the mesh is fit for purpose, that the resulting UV map can be used in later stages of production and that the mesh transformation can be adjusted by the user. Also, they implemented a prototypical UI to make it easier for artists to run their own augmentations through the browser.
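One simple example of a "fit for purpose" topology check is counting how many triangles share each edge. The sketch below is a generic illustration in Python and does not describe the checks Endava actually built. The function names and the triangle-list input format are assumptions.

```python
from collections import Counter


def edge_usage(faces):
    """Count how many triangles use each undirected edge."""
    counts = Counter()
    for a, b, c in faces:
        for i, j in ((a, b), (b, c), (c, a)):
            counts[(min(i, j), max(i, j))] += 1
    return counts


def non_manifold_edges(faces):
    """Edges shared by more than two triangles, which many tools reject."""
    return sorted(e for e, n in edge_usage(faces).items() if n > 2)


def boundary_edges(faces):
    """Edges used by exactly one triangle, i.e. holes or open borders."""
    return sorted(e for e, n in edge_usage(faces).items() if n == 1)
```

An empty result from both functions indicates a closed mesh in which every edge is shared by exactly two triangles.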

For certain assets, the approach could already help make artists more efficient and give them a basis to work with. However, the method should allow for more specific direction and better control over the way it augments the models thematically. Moreover, there are clear limitations to the quality of the mesh output of the implementation. Keeping the original mesh complexity has a clear benefit, because the mapping and even the animation of the input object can be kept consistent, but it is also why the augmentation of the mesh hits clear limits. To mitigate this, spikes appearing in the mesh can be recognised automatically, so that further computational resources are not spent on them. It might also be beneficial to look into a core method that can add complexity to the mesh in the places where the object is altered more strongly.
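Recognising spikes in the mesh automatically could be approached in several ways. The following Python sketch shows one simple heuristic and is not the detection method used in the project. It flags a vertex when it sits far from the centroid of its neighbours, measured against the median edge length. The function name `find_spike_vertices` and the `ratio` threshold are assumptions chosen for illustration.

```python
import math
import statistics
from collections import defaultdict


def find_spike_vertices(vertices, faces, ratio=2.0):
    """Return sorted indices of vertices that stick out from their neighbours.

    vertices: list of (x, y, z) tuples; faces: list of vertex index triples.
    ratio: hypothetical threshold, in multiples of the median edge length.
    """
    neighbours = defaultdict(set)
    for a, b, c in faces:
        neighbours[a].update((b, c))
        neighbours[b].update((a, c))
        neighbours[c].update((a, b))

    # Unique undirected edges; the median is robust against a few long spike edges.
    edges = {(min(i, j), max(i, j)) for i, ns in neighbours.items() for j in ns}
    if not edges:
        return []
    median_edge = statistics.median(
        math.dist(vertices[i], vertices[j]) for i, j in edges
    )

    spikes = []
    for i, ns in neighbours.items():
        centroid = [sum(vertices[j][k] for j in ns) / len(ns) for k in range(3)]
        if math.dist(vertices[i], centroid) > ratio * median_edge:
            spikes.append(i)
    return sorted(spikes)
```

A check like this could run between optimisation iterations, so that a run producing spikes is stopped or adjusted early. Where exactly it would sit in a pipeline is left open here.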

Could Endava’s specialists even come close to using generated assets as part of a crafting or breeding mechanic to create individualised skins or even mesh variations for unique player items? Would a direct integration without manual editing be feasible?

In the near to mid-term future, the team sees this solution not only working as a tool for asset creation or alteration but also as a feature that can be directly integrated in a game. Think crafting or breeding mechanics where the player can combine the characteristics of two individual assets into a brand-new form.
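As a purely hypothetical illustration of such a mechanic, the Python sketch below blends two variants of the same base mesh vertex by vertex. It assumes both parents share the same vertex count and order, and that per-vertex data (positions or colours) are stored as tuples of numbers. The name `breed_assets` and the linear blend are assumptions for illustration, not a feature Endava has described building.

```python
def breed_assets(parent_a, parent_b, weight=0.5):
    """Blend two per-vertex attribute lists from variants of one base mesh.

    parent_a, parent_b: lists of equal length holding numeric tuples,
    for example vertex positions or RGB colours.
    weight: 0.0 returns parent_a, 1.0 returns parent_b.
    """
    if len(parent_a) != len(parent_b):
        raise ValueError("parents must share the same vertex count")
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must lie between 0 and 1")
    return [
        tuple((1.0 - weight) * a + weight * b for a, b in zip(va, vb))
        for va, vb in zip(parent_a, parent_b)
    ]
```

A game could draw `weight` at random per crafting action, or use separate weights for geometry and colour, to obtain distinct offspring from the same two parents.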

According to Endava, in its current state, the selected method has only very limited use – and only if the game can accept the weird-looking results as part of its identity. However, the team believes there is great potential to develop a custom solution specifically for these kinds of games where the uniqueness of an asset or item could have huge value for the game economy.

Applying machine learning in the context of game production has a bright future, and Endava’s specialists are certain that the value it can create today is to be found in all stages of the software development lifecycle. If used to its full potential, it can create value especially where classic automation fails.

While this was an exciting experiment for the company’s team, they see it above all as a way of showcasing how they can help clients with their research and set up interdisciplinary teams with great expertise in a wide range of capabilities, stretching beyond core game development services.